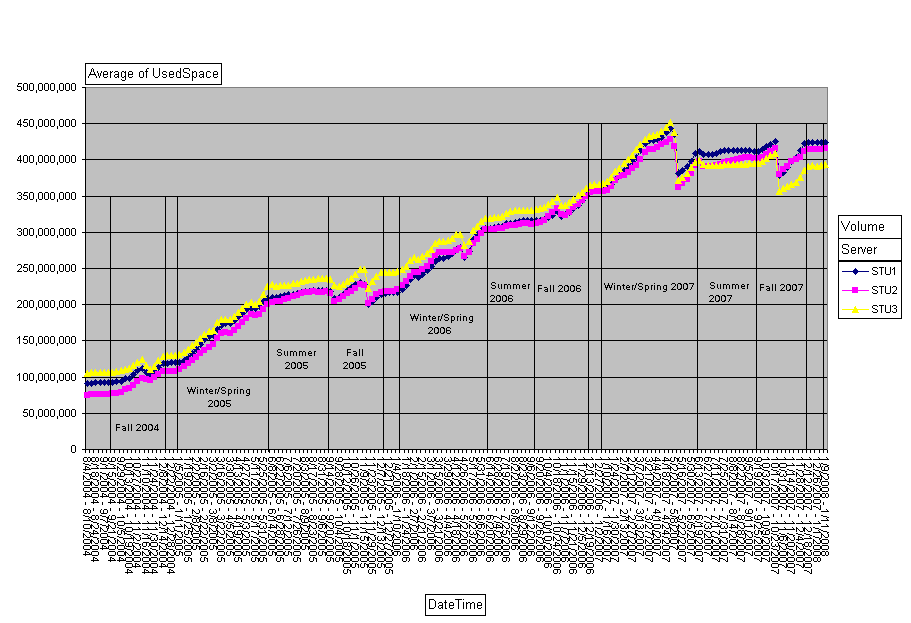

We've been having abend issues on the cluster lately, in the network services rather than in file serving. They seem to be triggered more by iPrint/NDPS than by MyFiles, though both are associated with them. The abend itself is in WS2_32.NLM, so it's in the network stack. I have a call open with Novell.

I finally, finally managed to get a meaningful packet capture after a failure, and I found some traffic that... doesn't look right. Take a look:

-> NCP Connection Destroy

<- R OK

<- FIN, PSH, ACK

<- RST, ACK

-> ACK (to R OK)

<- RST

Note the three packets in the middle. The responding server is tearing down the connection twice for some reason. Compare this with a 'normal' tear-down:

-> NCP Connection Destroy

<- R OK

-> FIN, PSH, ACK

<- ACK

-> RST, ACK

The first example I gave is the last traffic on the wire before the server abends, so it is of course highly suspicious. The pattern that leaps right out is that the responding server, rather than the sending server, is issuing the FIN, PSH, ACK and RST, ACK pair, and is doing so before the sending server can say "I got it" to the connection close packet.

Now I need to catch it in the act again to prove this theory.
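
To make the next capture easier to check, here is a rough Python sketch of the pattern described above. It assumes each trace has already been reduced by hand to (direction, summary) pairs like the listings in this post; the function names and the trace format are my own invention for illustration, not anything produced by the capture tool. It only flags the case where the responding server sends a FIN or RST after its R OK and before the sending server has said anything more.

```python
from typing import List, Tuple

# "->" is the sending server (the one that issued NCP Connection Destroy),
# "<-" is the responding server. This hand-written format is an assumption.
Packet = Tuple[str, str]

SUSPECT: List[Packet] = [
    ("->", "NCP Connection Destroy"),
    ("<-", "R OK"),
    ("<-", "FIN, PSH, ACK"),
    ("<-", "RST, ACK"),
    ("->", "ACK (to R OK)"),
    ("<-", "RST"),
]

NORMAL: List[Packet] = [
    ("->", "NCP Connection Destroy"),
    ("<-", "R OK"),
    ("->", "FIN, PSH, ACK"),
    ("<-", "ACK"),
    ("->", "RST, ACK"),
]


def flags_of(summary: str) -> frozenset:
    """Split a summary such as 'FIN, PSH, ACK' into a set of flag names."""
    return frozenset(part.strip() for part in summary.split(","))


def responder_tears_down_early(trace: List[Packet]) -> bool:
    """Return True if, after replying R OK to a Connection Destroy, the
    responder sends a FIN or RST before the sender sends anything else."""
    seen_destroy = False
    seen_reply = False
    for direction, summary in trace:
        if direction == "->" and summary == "NCP Connection Destroy":
            # Start (or restart) watching from the most recent destroy.
            seen_destroy, seen_reply = True, False
            continue
        if seen_destroy and not seen_reply:
            if direction == "<-" and summary == "R OK":
                seen_reply = True
            continue
        if seen_reply:
            if direction == "->":
                return False  # sender spoke first: the normal ordering
            if flags_of(summary) & {"FIN", "RST"}:
                return True   # responder started the teardown itself
    return False


if __name__ == "__main__":
    print("suspect trace flagged:", responder_tears_down_early(SUSPECT))
    print("normal trace flagged:", responder_tears_down_early(NORMAL))
```

This says nothing about why the responder behaves this way; it only separates the two orderings shown above so that a future capture can be compared against them quickly.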