Novell is having a problem. We've known this for about 8 years now, but there we are. The future of operating systems at Novell is SLES and OES. Not NetWare. This, they tell us, doesn't matter much since OES looks so much like NetWare to end-users that it isn't much of a problem.

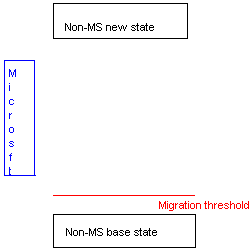

But this does overlook a sad fact in the market place. Take a look at this chart I drew up, 'leet drafting skills that they are:

What you see here is a base state, call it NetWare. At the far end is the new state, call it OES-Linux. And on the side you have a Great Attractor called "Microsoft". Also marked is a threshold that I call the migration threshold: once it is crossed, a migration to a new platform is possible. The 'energy' driving the leap to a new state is twofold: money and mind-share.

The problem is that anyone who is going to leap platforms has to overcome the attraction of Microsoft or end up on Microsoft. To put it in the context of the chart, you need enough energy to escape the attraction of Microsoft and reach the new base state that we techies want.

In the tech world here, a lot of NetWare shops are still NetWare because the pain of moving to anything else outweighs the pain of staying put. Now that Novell has signed the death-warrant of NetWare in the phrase, "no further development," the pain of staying put is now greater than the pain of moving. So people are moving.

And falling into the gravity well that is Microsoft. And as I learned recently, that may actually happen here too. Scary thought.

But as I said earlier, the 'energy' is quantified by some combination of money and mind-share. Money will be required in any move, be it moving to a new licensing scheme, a complete repurchase of your whole infrastructure, or retraining all of your sysadmins on this new Linux thingy. Mind-share is more complicated. If the management mind-share is that Novell is a dead-end product, that doesn't provide much energy at all. If management thinks that Novell has a future, that provides more of a boost.

But it is just one of those things in the marketplace these days: you have to provide a solid reason why you are not on Microsoft. Not why you are on it, but why you are NOT on it. As Novell said in one of the keynotes last week, only 90% of Novell's desktops run their Desktop Linux product, and only 50% of them do so exclusively. While 50% is quite a lot, it isn't anywhere near the penetration rate of Windows.

I think Novell is doing some good in providing alternatives to Windows, but we're still a decade away from any serious chink in the armor. Vista will provide a migration point for Windows shops, but that'll be soon enough that the Microsoft gravity-well won't have been diminished all that much.

There is hope though. IBM was selling Micro Channel well after it was clear that they had lost that battle. "You can't go wrong buying IBM" had fallen by the wayside. But it'll be several to many years before "you can't go wrong buying Microsoft" goes away.

Tags: novell, netware
